PA4: Symbolic Execution on Deep Neural Networks

Goal

In this assignment, you will implement symbolic execution (SE) on a given deep neural network (DNN). SE is a popular and well known testing approach while DNN is an increasingly important and practical application --- this PA will combine those two. You can consider DNN as a specific type of programs and thus you can "execute" it. Symbolic execution would executes the DNN on symbolic inputs and returns symbolic outputs (something similar to pathconditions and local variables that we discuss in class). In addition, we can represent the symbolic outputs as a logical formula and use a constraint solving such as Z3 to check for "assertions", i.e., properties about the DNN.

As with the previous assignment, you can use any language for this assignment, but your program must run on the CSE machine (where I will evaluate your submission). So do not use external libraries or any extra tools etc. You are also strongly encouraged to use Python because Z3 has an easy-to-use API for Python (it also has API for C/C++ but it might be more difficult to use).

For this assignment, you must work alone. However, you are allowed to talk to others (e.g., discussing problems, work on the ideas together), but the implementation and submission must be done individually. If you are stuck, you can post your question as a discussion on Canvas or email me.

Complete Example

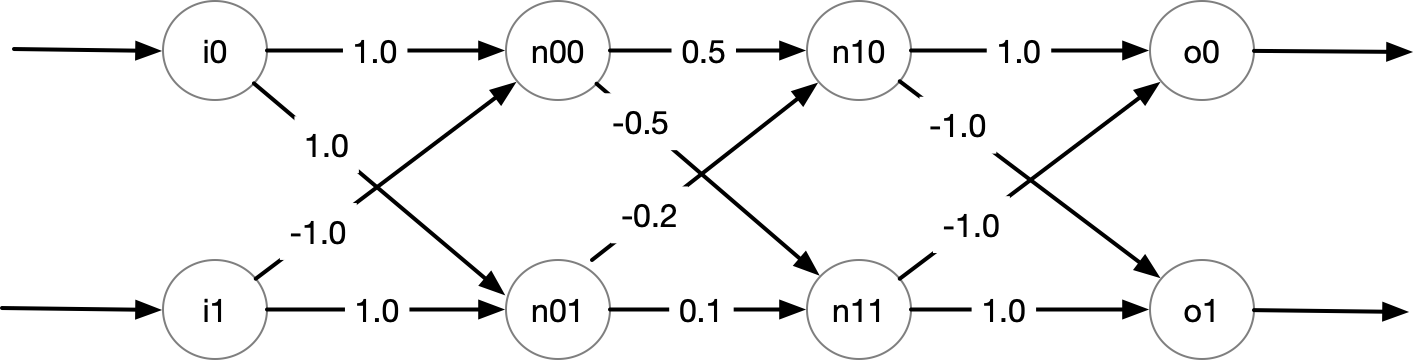

Consider the following DNN with 2 inputs, 2 hidden layers, and 2 outputs.

We can encode this DNN and additional details using Python:

# (weights, bias, use activation function relu or not)

n00 = ([1.0, -1.0], 0.0, True)

n01 = ([1.0, 1.0], 0.0, True)

hidden_layer0 = [n00,n01]

n10 = ([0.5, -0.2], 0.0, True)

n11 = ([-0.5, 0.1], 0.0, True)

hidden_layer1 = [n10, n11]

# don't use relu for outputs

o0 = ([1.0, -1.0], 0.0, False)

o1 = ([-1.0, 1.0], 0.0, False)

output_layer = [o0, o1]

dnn = [hidden_layer0, hidden_layer1, output_layer]

In this DNN, the outputs of the neurons in the hidden layers (prefixed with n) are applied with the relu activation function, but the outputs of the DNN (prefixed with o) are not. These settings are controlled by the True, False parameters as shown above. Also, this example does not use bias, i.e., bias values are all 0.0's as shown. Note that all of these settings are parameterized and I deliberately use this example to show these how these parameters are used (e.g., relu only applies to hidden neurons, but not outputs).

After performing symbolic execution on dnn, we obtain symbolic states, represented by a logical formula relating inputs and outputs.

# my_symbolic_execution is something you implement,

# it returns a single (but large) formula representing the symbolic states.

symbolic_states = my_symbolic_execution(dnn)

...

"done, obtained symbolic states for DNN with 2 inputs, 4 hidden neurons, and 2 outputs in 0.1s"

assert z3.is_expr(symbolic_states) #symbolic_states is a Z3 formula/expression

print(symbolic_states)

# And(n0_0 == If(i0 + -1*i1 <= 0, 0, i0 + -1*i1),

# n0_1 == If(i0 + i1 <= 0, 0, i0 + i1),

# n1_0 ==

# If(1/2*n0_0 + -1/5*n0_1 <= 0, 0, 1/2*n0_0 + -1/5*n0_1),

# n1_1 ==

# If(-1/2*n0_0 + 1/10*n0_1 <= 0, 0, -1/2*n0_0 + 1/10*n0_1),

# o0 == n1_0 + -1*n1_1,

# o1 == -1*n1_0 + n1_1)

We can use a constraint solver such as Z3 to query various things about this DNN from the obtained symbolic states:

-

Generating random inputs and obtain outputs

z3.solve(symbolic_states) [n0_1 = 15/2, o1 = 1/2, o0 = -1/2, i1 = 7/2, n1_1 = 1/2, n1_0 = 0, i0 = 4, n0_0 = 1/2] -

Simultating a concrete execution

i0, i1, n0_0, n0_1, o0, o1 = z3.Reals("i0 i1 n0_0 n0_1 o0 o1")

# finding outputs when inputs are fixed [i0 == 1, i1 == -1]

g = z3.And([i0 == 1.0, i1 == -1.0])

z3.solve(z3.And(symbolic_states, g)) # we get o0, o1 = 1, -1

[n0_1 = 0,

o1 = -1,

o0 = 1,

i1 = -1,

n1_1 = 0,

n1_0 = 1,

i0 = 1,

n0_0 = 2]

- Checking assertions

print("Prove that if (n0_0 > 0.0 and n0_1 <= 0.0) then o0 > o1")

g = z3.Implies(z3.And([n0_0 > 0.0, n0_1 <= 0.0]), o0 > o1)

print(g) # Implies(And(i0 - i1 > 0, i0 + i1 <= 0), o0 > o1)

z3.prove(z3.Implies(symbolic_states, g)) # proved

print("Prove that when (i0 - i1 > 0 and i0 + i1 <= 0), then o0 > o1")

g = z3.Implies(z3.And([i0 - i1 > 0.0, i0 + i1 <= 0.0]), o0 > o1)

print(g) # Implies(And(i0 - i1 > 0, i0 + i1 <= 0), o0 > o1)

z3.prove(z3.Implies(symbolic_states, g))

# proved

print("Disprove that when i0 - i1 >0, then o0 > o1")

g = z3.Implies(i0 - i1 > 0.0, o0 > o1)

print(g) # Implies(And(i0 - i1 > 0, i0 + i1 <= 0), o0 > o1)

z3.prove(z3.Implies(symbolic_states, g))

# counterexample

# [n0_1 = 15/2,

# i1 = 7/2,

# o0 = -1/2,

# o1 = 1/2,

# n1_0 = 0,

# i0 = 4,

# n1_1 = 1/2,

# n0_0 = 1/2]

TIPS

- It is strongly recommend that you do symbolic execution on this DNN example by hand first before attempt any coding. This example is small enough that you can work out step by step. For example, you can do these two steps

- First, obtain the symbolic states by hand (e.g., on a paper) for the given DNN

- Then, put what you have in code but also for the given DNN. Use Z3 format (e.g., you would declare the inputs as symbolic values

i0 = z3.Real("i0"), then compute the neurons and outputs, etc) -

Finally, convert what you have into a general program that would work for any DNN inputs.

-

Two ways of doing symbolic execution

- As mentioned in class, for this assignment, you can follow the traditional SE method which produces the symbolic execution trees with multiple execution paths (recall each condition forks into 2 paths). You can decide whether to do a depth-first search or breadth-first search here (they will have the same results). At the end, the

symbolic statesare a disjunction of the path conditions (i.e.,z3.And[path_conds]). -

But since we are using Z3, a simpler way (which I explained in class), is to encode all those forked paths directly as a formula. For example

if (x + y > 0): r = 3 else: r = 4Using traditional SE, you would have 2 paths, e.g., path 1:

x+y > 0 && r = 3and path 2 :x+y <= 0 && r ==4, from which you take the disjunction and get(x+y > 0 && r == 3) || (x+y <= 0 && r ==4)). But for this assignment, instead of having to fork into two paths, you can use Z3 to encode both branches using theIffunction, e.g.,If(x+y>0, r==3, r==4)orr==If(x+y>0,3,4). The results of these two methods are exactly the same at the end, just that the prior, traditional one you do more work while the later you do less. It is up to you. -

When

biasis none-zero, then it will simply be added to the neuron computation, i.e.,neuron = relu(sum(value_i * weight_i) + bias). For example, ifbiasis0.123then for neuronn0_0we would obtainn0_0 == If(i0 + -1*i1 + 0.123 <= 0, 0, i0 + -1*i1 + 0.123). -

You can use the below method to construct Z3 formula for

relu

@classmethod

def relu(cls, v):

return z3.If(0.0 >= v, 0.0, v)

- To install Z3, you can simply use

pip3

cse fac/tnguyen> pip3 install z3-solver

Defaulting to user installation because normal site-packages is not writeable

Collecting z3-solver

Downloading z3_solver-4.8.9.0-py2.py3-none-manylinux1_x86_64.whl (30.5 MB)

|████████████████████████████████| 30.5 MB 25.6 MB/s

Installing collected packages: z3-solver

Successfully installed z3-solver-4.8.9.0

WARNING: You are using pip version 20.2.1; however, version 20.2.4 is available.

You should consider upgrading via the '/usr/local/python/.pyenv/versions/3.7.2/bin/python3.7 -m pip install --upgrade pip' command.

pyenv: cannot rehash: /usr/local/python/.pyenv/shims isn't writable

cse fac/tnguyen> python

Python 3.7.2 (default, Aug 15 2019, 13:44:58)

[GCC 4.8.5] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> import z3

>>> z3.get_version()

(4, 8, 9, 0)

Conventions and Requirements

Your program must have the following (all of these are given in the concrete example above)

- a main function

my_symbolic_execution(dnn)that- takes as input the DNN, whose specifications are given as example above

- returns symbolic states of the DNN represented as a

Z3 expression(a formula) as shown above - this goes without saying but do NOT write this function only to work with the given example (i.e., do not hard-code the weight values etc in your program). This function should work with any DNN input (though it could be slow for big DNN's).

- in your resulting formula, you must name

- the

nthinput asin(e.g., the first input isi0) - the

nthoutput ason(e.g., the second output iso1) - the

jthneuron at theithlayer asni_j. Note that the first layer is the0thlayer

- the

- a testing function

test()where you copy and paste pretty much the complete examples given above to demonstrate that your SE works for the given example. In summary, yourtestwill include- the specification of the 2x2x2x2 DNN in the Figure

- run the symbolic execution on the dnn (as shown above, it should output the dimension of the dnn and runtime)

- output the symbolic states results

- apply Z3 to the symbolic states to obtain

- random inputs and associated outputs

- simulate concrete execution

- checking the 3 assertions as shown

- another testing function

test2()where you create another DNN:- The DNN will have

- the same number of 2 inputs and 2 outputs, but 3 hidden layers where each layer has 4 neurons, i.e., a 2x4x4x4x2 DNN.

- non-0.0 bias values.

- then on this DNN, do every single tasks you did in

test()(run SE on it, output results, apply Z3 etc). You will need to have 2 assertions that are true and 1 assertion that is false (just like above).- Initially you might randomly generate weights and bias values, but it is likley that you will need to manually adjust them so that you can prove some correct assertions (randomly generated values probably will not give you a meaningful DNN with any asserted properties).

- The DNN will have

Important: If you use a language other than Python, then adapt and modify these Python code for the test.

As usual, you can be as creative as you want, but your SE must not run for too long and must not take up too much space (e.g., do not generate over 50MB of files). Use the README to tell me exactly how to compile/run your program, e.g., python3 se.py. Again, make sure that your code works on the CSE machine and do not hard code anything specific to your account.

What to Turn In

You must turn in a zip file containing the following files. Use the following names:

- the zip file must be named

pa4-yourlast-firstname.zip - One single main source file

se.ext, whereextis the extension of the source file of the language of your choice (e.g.,.py,.c). As usual, indicate in the README file on how I can run it. - your file should include at least a

my_symbolic_executionfunction and two test functionstest and test2()as indicated above - a

readme.txtfile (IMPORTANT: see the details below, I've made some additional requirement) - 2 screen shots showing how (1) you start your program (e.g., the commands used to compile and run your program) and (2) the end of the run

- Nothing else, do not include

exe,binary file, or anything else.

The readme.txt file should be a plain ASCII text file (not a Word file, not an RTF file, not an HTML file) consisting of the following:

- Comlete run show how you run your program AND the its complete outputs, e.g.,

$ python3 se.py #this should execute both test() and test2

.... #and show me all outputs (something similar to the outputs for the complete example above)

-

Answer the following questions about your SE

-

Briefly describe your SE algorithm (if you use the one from the book or anywhere, briefly summarize)

- What modifications (if any) did you made to make your SE work?

- What do you think the most difficult part of this assignment? (e.g., programming symblic execution, using Z3, etc)

- What advice do you give other students (e.g., students in this class next year) about this PA?

Grading Rubric

Grading (out of 20 points):

- 15 points — for a complete SE as described

- 10 points if your SE works for the given example (i.e.,

test()produces similar results) - 3 points if your SE works for the addition example you created

- 2 points if your SE works on my own example (not provided). This means you should try to generalize your work and test it on various scenarios.

- 5 points — for the answers in the README

- 5 — clear explanations on running the program and thorough answers for the given questions

- 2 — vague or hard to understand; omits important details

- 0 — little to no effort, or submitted an RTF/DOC/PDF file instead of plain TXT